Home – The Conversation |

- We're creating 'humanized pigs' in our ultraclean lab to study human illnesses and treatments

- Pollen can raise your risk of catching COVID-19, whether or not you have allergies, study finds

- A nutrition report card for Americans: Dark clouds, silver linings

- Astrocyte cells in the fruit fly brain are an on-off switch that controls when neurons can change and grow

- How many states and provinces are in the world?

- Northern Ireland, born of strife 100 years ago, again erupts in political violence

- Derek Chauvin trial: 3 questions America needs to ask about seeking racial justice in a court of law

- Write ill of the dead? Obits rarely cross that taboo as they look for the positive in people's lives

- What inspired digital nomads to flee America's big cities may spur legions of remote workers to do the same

- MLB's decision to drop Atlanta highlights the economic power companies can wield over lawmakers – when they choose to

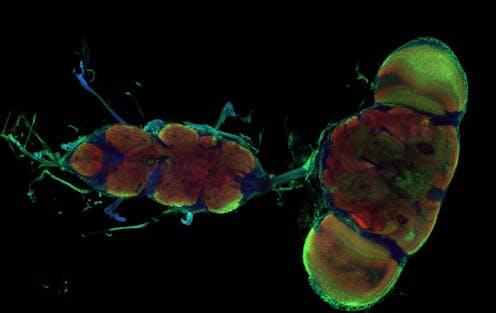

| We're creating 'humanized pigs' in our ultraclean lab to study human illnesses and treatments
Posted: 12 Apr 2021 12:18 PM PDT

The U.S. Food and Drug Administration requires all new medicines to be tested in animals before use in people. Pigs make better medical research subjects than mice, because they are closer to humans in size, physiology and genetic makeup. In recent years, our team at Iowa State University has found a way to make pigs an even closer stand-in for humans. We have successfully transferred components of the human immune system into pigs that lack a functional immune system. This breakthrough has the potential to accelerate medical research in many areas, including virus and vaccine research, as well as cancer and stem cell therapeutics.

Existing biomedical models

Severe Combined Immunodeficiency, or SCID, is a genetic condition that causes impaired development of the immune system. People can develop SCID, as dramatized in the 1976 movie "The Boy in the Plastic Bubble." Other animals can develop SCID, too, including mice. Researchers in the 1980s recognized that SCID mice could be implanted with human immune cells for further study. Such mice are called "humanized" mice and have been optimized over the past 30 years to study many questions relevant to human health.

Mice are the most commonly used animal in biomedical research, but results from mice often do not translate well to human responses, thanks to differences in metabolism, size and divergent cell functions compared with people. Nonhuman primates are also used for medical research and are certainly closer stand-ins for humans. But using them for this purpose raises numerous ethical considerations. With these concerns in mind, the National Institutes of Health retired most of its chimpanzees from biomedical research in 2013. Alternative animal models are in demand. Swine are a viable option for medical research because of their similarities to humans.
And with their widespread commercial use, pigs are met with fewer ethical dilemmas than primates. Upwards of 100 million hogs are slaughtered each year for food in the U.S.

Humanizing pigs

In 2012, groups at Iowa State University and Kansas State University, including Jack Dekkers, an expert in animal breeding and genetics, and Raymond Rowland, a specialist in animal diseases, serendipitously discovered a naturally occurring genetic mutation in pigs that caused SCID. We wondered if we could develop these pigs to create a new biomedical model. Our group has worked for nearly a decade developing and optimizing SCID pigs for applications in biomedical research. In 2018, we achieved a twofold milestone when working with animal physiologist Jason Ross and his lab. Together we developed a more immunocompromised pig than the original SCID pig – and successfully humanized it, by transferring cultured human immune stem cells into the livers of developing piglets.

During early fetal development, immune cells develop within the liver, providing an opportunity to introduce human cells. We inject human immune stem cells into fetal pig livers using ultrasound imaging as a guide. As the pig fetus develops, the injected human immune stem cells begin to differentiate – or change into other kinds of cells – and spread through the pig's body. Once SCID piglets are born, we can detect human immune cells in their blood, liver, spleen and thymus gland. This humanization is what makes them so valuable for testing new medical treatments.

We have found that human ovarian tumors survive and grow in SCID pigs, giving us an opportunity to study ovarian cancer in a new way. Similarly, because human skin survives on SCID pigs, scientists may be able to develop new treatments for skin burns. Other research possibilities are numerous.

Pigs in a bubble

Since our pigs lack essential components of their immune system, they are extremely susceptible to infection and require special housing to help reduce exposure to pathogens. SCID pigs are raised in bubble biocontainment facilities. Positive pressure rooms, which maintain a higher air pressure than the surrounding environment to keep pathogens out, are coupled with highly filtered air and water. All personnel are required to wear full personal protective equipment. We typically have anywhere from two to 15 SCID pigs and breeding animals at a given time. (Our breeding animals do not have SCID, but they are genetic carriers of the mutation, so their offspring may have SCID.)

As with any animal research, ethical considerations are always front and center. All our protocols are approved by Iowa State University's Institutional Animal Care and Use Committee and are in accordance with The National Institutes of Health's Guide for the Care and Use of Laboratory Animals. Every day, twice a day, our pigs are checked by expert caretakers who monitor their health status and provide engagement. We have veterinarians on call. If any pigs fall ill, and drug or antibiotic intervention does not improve their condition, the animals are humanely euthanized.

Our goal is to continue optimizing our humanized SCID pigs so they can be more readily available for stem cell therapy testing, as well as research in other areas, including cancer. We hope the development of the SCID pig model will pave the way for advancements in therapeutic testing, with the long-term goal of improving human patient outcomes.

Adeline Boettcher earned her research-based Ph.D. working on the SCID project in 2019. Christopher Tuggle receives funding from the US National Institutes of Health to develop the SCID pig model. The Artemis SCID pig model has been patented by Iowa State University (#9,745,561) and can be licensed for use.
Adeline Boettcher does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment. |

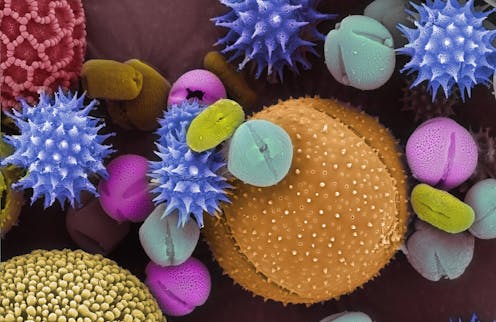

| Pollen can raise your risk of catching COVID-19, whether or not you have allergies, study finds
Posted: 12 Apr 2021 10:42 AM PDT

Exposure to pollen can raise the risk of developing COVID-19, and it is not just a problem for people with allergies, new research shows. Plant physiologist Lewis Ziska, a co-author of the new peer-reviewed study and of other recent research on pollen and climate change, explains the findings and why pollen seasons are getting longer and more intense.

What does pollen have to do with a virus?

The most important takeaway from our new study is that pollen can be a factor in exacerbating COVID-19. A couple of years ago, my co-authors showed that pollen can suppress how the human immune system responds to viruses. By interfering with proteins that signal antiviral responses in the cells lining the airways, it can leave people more susceptible to a whole host of respiratory viruses, such as the flu virus and other SARS viruses.

In this study, we looked specifically at COVID-19. We wanted to see how the number of new infections changed with the rise and fall of pollen levels in 31 countries around the world. We found that, on average, about 44% of the variability in COVID-19 case rates was related to pollen exposure, often in synergy with humidity and temperature.

Infection rates tended to rise four days after a high pollen count. Where there was no local lockdown, the infection rate increased by an average of about 4% per 100 pollen grains in a cubic meter of air. A strict lockdown cut the increase by half.

This pollen exposure is not just a problem for people with hay fever. It is a reaction to pollen in general. Even types of pollen that do not normally cause allergic reactions were correlated with an increase in COVID-19 infections.

What precautions can you take?

On days with high pollen counts, try to stay indoors to limit your exposure as much as possible. When you are outdoors, wear a mask during pollen season. Pollen grains are large enough that almost any mask designed for allergies will work to keep them out. If you are sneezing and coughing, however, wear a mask that is effective against the coronavirus. If you are asymptomatic with COVID-19, all that sneezing raises your chances of spreading the virus. Mild cases of COVID-19 can also be mistaken for allergies.

Why is pollen season lasting longer?

As the climate changes, we are seeing three things that relate specifically to pollen. One is an earlier start to pollen season. Spring changes are beginning earlier, and there are signals worldwide of pollen exposure earlier in the season.

Second, the overall pollen season is getting longer. The time you are exposed to pollen, from spring, which is driven mainly by tree pollen, through summer, which is weeds and grasses, and then fall, which is mostly ragweed, is about 20 days longer in North America now than it was in 1990. As you move toward the poles, where temperatures are rising faster, we found that the lengthening of the season becomes even more pronounced.

Third, more pollen is being produced. Colleagues and I described all three changes in a paper published in February. As climate change drives pollen counts upward, that could result in greater human susceptibility to viruses.

These changes in pollen season have been underway for several decades. When my colleagues and I looked back at as many different pollen records as we could locate going back to the 1970s, we found strong evidence suggesting that these changes have been occurring for at least the past 30 to 40 years.

Greenhouse gas concentrations are rising and the Earth's surface is warming, and that will affect life as we know it. I have been studying climate change for 30 years. It is so endemic to today's environment that it will be difficult to examine any medical problem without at least trying to understand whether climate change has already affected it or is going to.

The Spanish-language version of this article was translated by El Financiero. Sean Lang does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond the academic appointment cited. |

| A nutrition report card for Americans: Dark clouds, silver linings
Posted: 12 Apr 2021 08:18 AM PDT

Many of the latest findings on the American diet are not encouraging. Almost half of U.S. adults, or 46%, have a poor-quality diet, with too little fish, whole grains, fruits, vegetables, nuts and beans, and too much salt, sugar-sweetened beverages and processed meats. Our additional research shows U.S. kids are doing even worse: More than half, or 56%, have a poor diet. Importantly, for both adults and children, most of the dietary shortcomings were from too few healthy foods, rather than too many unhealthy foods.

I am a cardiologist and professor and dean of the Friedman School of Nutrition Science and Policy at Tufts University. In a series of research papers using national data collected over the past 20 years, my colleagues and I have investigated how the dietary habits of Americans have evolved. We have assessed diets among adults and children, among women and men, and by race and ethnicity, income, education and food security status.

Dark clouds, silver linings

The largest single category of foods is carbohydrate-rich: grains, cereals, starches and sugars. In the U.S., 42% of all calories consumed are carbohydrates from lower-nutritional-quality foods such as refined grains and cereals, added sugars and potatoes. Only 9% of calories are from higher-nutritional-quality carbohydrates, such as whole grains, fruits, legumes and non-starchy vegetables.

What's more, the average American takes in nearly four 50-gram servings – or about 7 ounces – of processed meat per week. Processed meats include luncheon meats, sausage, hot dogs, ham and bacon. These products, preserved with sodium, nitrites and other additives, have strong links to stroke, heart disease, diabetes and some cancers.

The news is sobering, but there are glimmers of a silver lining. Comparing trends since 1999-2000, the average American diet has actually improved over time.
Back then, 56% of adults and 77% of kids had poor diets. Since then, both kids and adults have increased whole grain intake. Both have also cut back on sugar-sweetened beverages – kids by half, from two daily servings to one. Adults have also modestly increased consumption of nuts, seeds and legumes; and kids, of fruits and vegetables. Intakes of unprocessed red meats declined by about half a serving per week, replaced by poultry. Intakes of fish and processed meat did not appreciably change. But these improvements are not equitably distributed. Comparing different races and ethnicities, or income and education levels, disparities remain. In many cases, they have widened over time. Our most recent data shows 44% of Black adults have poor-quality diets, compared with 31% of whites. Of kids whose most educated parent has a high school degree, nearly two-thirds – 63% – have a poor diet; for kids with at least one parent with a college degree, it's 43%. In our most recent, and perhaps our most compelling, research, we've evaluated nutritional quality of the American diet according to the food source: grocery stores, restaurants, schools, work sites and other venues. We found that foods eaten from fast-food or fast-casual restaurants offered the worst nutrition – 85% of foods eaten by children at these establishments, and 70% by adults, were of poor quality. At full-service restaurants and work-site cafeterias, about half the foods eaten were of poor quality. At grocery stores, we found some improvement from 2003 to 2018. The percentage of poor-quality foods eaten from grocery stores dropped from 40% to 33% for adults, and 53% to 45% for children. But the largest improvements from 2003 to 2018 happened at schools. The proportion of poor-quality foods eaten from school was cut by more than half, from 56% to 24%. 
Nearly all of this occurred after 2010, with the passage of the federal Healthy, Hunger-Free Kids Act, which created much stronger nutrition standards for schools and early child care. Improvements we found included higher intakes of whole grains, fruits, greens and beans, and fewer sugar-sweetened beverages, refined grains and added sugars – all targets of the legislation. In fact, looking across U.S. food sources, we found that schools have become the top overall source of nutritious eating in the country. As the U.S. slowly recovers from the pandemic, these results amplify the importance of reopening schools, and providing school meals, to ensure nutritious eating for kids.

Suggestions for change

Both COVID-19 and the country's awakening on systemic racism have raised the national consciousness on the fragmented, fragile and inequitable nature of its food system. This makes our findings of racial and ethnic nutritional disparities even more dire. To achieve true nutrition security, we need a series of policy actions and business innovations to shift our food system toward health, equity and sustainability.

These include promoting food as medicine by integrating nutrition into health care and food assistance programs; creating a National Institute of Nutrition and new public-private partnerships to accelerate science, innovation and entrepreneurship; and creating a new Office of the National Director of Food and Nutrition to coordinate the currently fragmented US$150 billion annual federal investments in diverse food and nutrition areas.

Policy change works, as clearly demonstrated by the dramatic impact of a single policy change – the 2010 Healthy, Hunger-Free Kids Act – on the nutrition of millions of American children. It's time to grasp this unique moment in the country's history and reimagine U.S. national food policy to create a nourishing and sustainable food system for all.

Dr.
Mozaffarian reports research funding from the National Institutes of Health, the Gates Foundation, and the Rockefeller Foundation; personal fees from Acasti Pharma, America's Test Kitchen, Barilla, Cleveland Clinic Foundation, Danone, GOED, and Motif FoodWorks; scientific advisory board, Beren Therapeutics, Brightseed, Calibrate, DayTwo (ended 6/20), Elysium Health, Filtricine, Foodome, HumanCo, January Inc., Season, and Tiny Organics; and chapter royalties from UpToDate. |

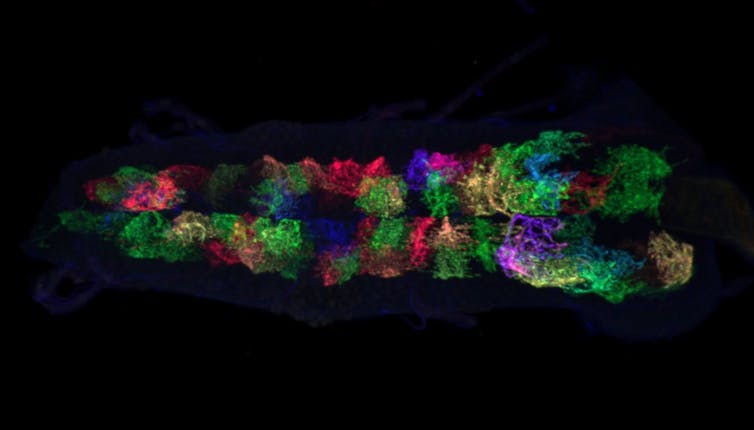

| Astrocyte cells in the fruit fly brain are an on-off switch that controls when neurons can change and grow
Posted: 12 Apr 2021 05:28 AM PDT

The Research Brief is a short take about interesting academic work.

The big idea

Neuroplasticity – the ability of neurons to change their structure and function in response to experiences – can be turned off and on by the cells that surround neurons in the brain, according to a new study on fruit flies that I co-authored. As fruit fly larvae age, their neurons shift from a highly adaptable state to a stable state and lose their ability to change. During this process, support cells in the brain – called astrocytes – envelop the parts of the neurons that send and receive electrical information. When my team removed the astrocytes, the neurons in the fruit fly larvae remained plastic longer, hinting that somehow astrocytes suppress a neuron's ability to change. We then discovered two specific proteins that regulate neuroplasticity.

Why it matters

The human brain is made up of billions of neurons that form complex connections with one another. Flexibility at these connections is a major driver of learning and memory, but things can go wrong if it isn't tightly regulated. For example, in people, too much plasticity at the wrong time is linked to brain disorders such as epilepsy and Alzheimer's disease. Additionally, reduced levels of the two neuroplasticity-controlling proteins we identified are linked to increased susceptibility to autism and schizophrenia. Similarly, in our fruit flies, removing the cellular brakes on plasticity permanently impaired their crawling behavior. While fruit flies are of course different from humans, their brains work in very similar ways to the human brain and can offer valuable insight.

One obvious benefit of discovering the effect of these proteins is the potential to treat some neurological diseases. But since a neuron's flexibility is closely tied to learning and memory, in theory, researchers might be able to boost plasticity in a controlled way to enhance cognition in adults.
This could, for example, allow people to more easily learn a new language or musical instrument.

How we did the work

My colleagues and I focused our experiments on a specific type of neuron called a motor neuron. These neurons control movements like crawling and flying in fruit flies. To figure out how astrocytes controlled neuroplasticity, we used genetic tools to turn off specific proteins in the astrocytes one by one and then measured the effect on motor neuron structure. We found that astrocytes and motor neurons communicate with one another using a specific pair of proteins called neuroligins and neurexins. These proteins essentially function as an off button for motor neuron plasticity.

What still isn't known

My team discovered that two proteins can control neuroplasticity, but we don't know how these cues from astrocytes cause neurons to lose their ability to change. Additionally, researchers still know very little about why neuroplasticity is so strong in younger animals and relatively weak in adulthood. In our study, we showed that prolonging plasticity beyond development can sometimes be harmful to behavior, but we don't yet know why that is, either.

What's next

I want to explore why longer periods of neuroplasticity can be harmful. Fruit flies are great study organisms for this research because it is very easy to modify the neural connections in their brains. In my team's next project, we hope to determine how changes in neuroplasticity during development can lead to long-term changes in behavior. There is so much more work to be done, but our research is a first step toward treatments that use astrocytes to influence how neurons change in the mature brain. If researchers can understand the basic mechanisms that control neuroplasticity, they will be one step closer to developing therapies to treat a variety of neurological disorders.

Sarah DeGenova Ackerman receives funding from the NIH/NINDS. Sarah DeGenova Ackerman is a Milton Safenowitz postdoctoral fellow of the ALS Association. |

| How many states and provinces are in the world?
Posted: 12 Apr 2021 05:27 AM PDT

Curious Kids is a series for children of all ages. If you have a question you'd like an expert to answer, send it to curiouskidsus@theconversation.com.

The exact answer is hard to come by – for now. Your question has actually sparked scholars to start talking about compiling an official, authoritative database. Right now the best estimates land somewhere between 3,600 and 5,200, across the world's roughly 200 nations. It depends on whether you collect data from specific nations' own information, the CIA World Factbook or the International Organization for Standardization.

There are 195 national governments recognized by the United Nations, but there are as many as nine other places with nationlike governments, including Taiwan and Kosovo, though they are not recognized by the U.N.

Most of these countries are divided into smaller sections, the way the U.S. is broken up into 50 states along with territories, like Puerto Rico and Guam, and a federal district, Washington, D.C. They are not all called "states," though: Switzerland has cantons, Bangladesh has divisions, Cameroon has regions, Germany has Länder, Jordan has governorates, Montserrat has parishes, Zambia has provinces, and Japan has prefectures – among many other names.

Most countries have some type of major subdivision – even tiny Andorra, tucked in the Pyrenees Mountains between France and Spain, has seven parishes. Slovenia has the most, with 212: 201 municipalities, called "občine" in Slovenian, and 11 urban municipalities, called "mestne občine." Singapore, Monaco and Vatican City, all small city-states, are the three nations that have what are called "unitary" governments that are not divided into smaller sections.

Dividing governing power between national and subnational levels is called "federalism." It lets countries organize large areas of land and large numbers of people, handling different interests of diverse groups, often with different languages, religions and ethnic identities. National governments still control international relations, military power and money and banking systems – things that affect everyone in a country equally.
But states, provinces, cantons and the like let more local government groups have some amount of say over health care, education, policing and other issues where needs can vary substantially from one area to another. Variations in laws and regulations benefit people in a couple of different ways. First, people can leave one area and move to another that has laws or policies that are more to their liking. In addition, different regions can try different approaches to solving particular problems – like educating all children or providing health care in rural areas, perhaps identifying which methods are more effective. Federal systems also make it easier for citizens to join government by running for office, including challenging the current officeholders. It is much cheaper and less complicated to seek support from voters in a smaller area. Smaller government agencies can also make better use of local knowledge about geography or historical traditions to govern people in ways that fit their needs.  There are some drawbacks, too: Some regions may have laws and rules that expand business opportunities or protect the environment – while other regions may have fewer business regulations or more damaged landscapes. Problems like that can mean people who live near one another – but in different states – have unequal qualities of life. And sometimes provincial governments can slow the progress of major national initiatives meant to benefit everyone. But most countries seem to have decided that the positives outweigh the negatives. And in fact, they've gone even deeper into federalism. Beyond states and provinces, there are many even smaller units of government. In the U.S., states are made up of counties, which are in turn made up of towns, cities or other municipal governments. There are many more thousands of these – the Database of Global Administrative Areas tallies 386,735. Brazil alone has 5,570 municipalities. 
India has 250,671 village councils, called "gram panchayats." But even those are divided into smaller districts called "wards," each of which votes for its own council member. If you want some more fun, try looking for the flags of each of these subnational governments!

Vasabjit Banerjee does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment. |

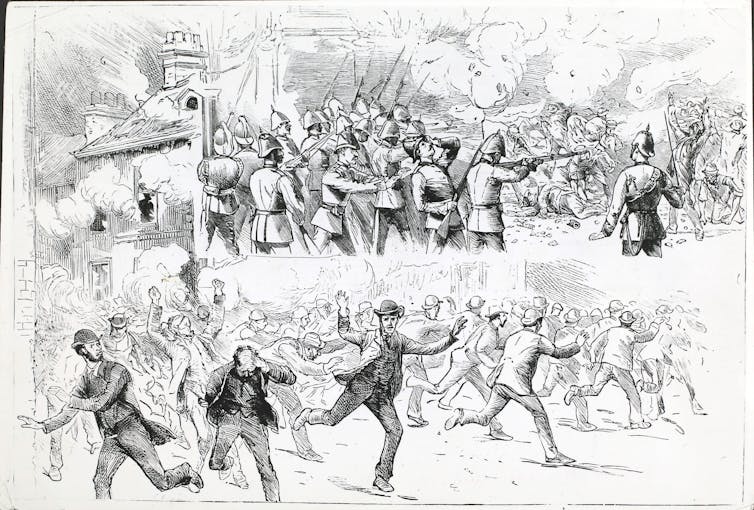

| Northern Ireland, born of strife 100 years ago, again erupts in political violence
Posted: 12 Apr 2021 05:27 AM PDT

Sectarian rioting has returned to the streets of Northern Ireland, just weeks shy of its 100th anniversary as a territory of the United Kingdom. For several nights, young protesters loyal to British rule – fueled by anger over Brexit, policing and a sense of alienation from the U.K. – set fires across the capital of Belfast and clashed with police. Scores have been injured.

U.K. Prime Minister Boris Johnson, calling for calm, said "the way to resolve differences is through dialogue, not violence or criminality." But Northern Ireland was born of violence. Deep divisions between two identity groups – broadly defined as Protestant and Catholic – have dominated the country since its very founding. Now, roiled anew by the impact of Brexit, Northern Ireland is seemingly moving in a darker and more dangerous direction.

Colonization of Ireland

The island of Ireland, whose northernmost part lies a mere 13 miles from Britain, has been contested territory for at least nine centuries. Britain long gazed with colonial ambitions on its smaller Catholic neighbor. The 12th-century Anglo-Norman invasion first brought the neighboring English to Ireland. In the late 16th century, frustrated by continuing native Irish resistance, Protestant England implemented an aggressive plan to fully colonize Ireland and stamp out Irish Catholicism. Known as "plantations," this social engineering exercise "planted" strategic areas of Ireland with tens of thousands of English and Scottish Protestants. Plantations offered settlers cheap woodland and bountiful fisheries. In exchange, Britain established a base loyal to the British crown – not to the Pope. England's most ambitious plantation strategy was carried out in Ulster, the northernmost of Ireland's provinces.
By 1630, according to the Ulster Historical Foundation, there were about 40,000 English-speaking Protestant settlers in Ulster. Though displaced, the native Irish Catholic population of Ulster was not converted to Protestantism. Instead, two divided and antagonistic communities – each with its own culture, language, political allegiances, religious beliefs and economic histories – shared one region.

Whose Ireland is it?

Over the next two centuries, Ulster's identity divide transformed into a political fight over the future of Ireland. "Unionists" – most often Protestant – wanted Ireland to remain part of the United Kingdom. "Nationalists" – most often Catholic – wanted self-government for Ireland. These fights played out in political debates, the media, sports, pubs – and, often, in street violence.

By the early 1900s, a movement of Irish independence was rising in the south of Ireland. The nationwide struggle over Irish identity only intensified the strife in Ulster. The British government, hoping to appease nationalists in the south while protecting the interests of Ulster unionists in the north, proposed in 1920 to partition Ireland into two parts: one majority Catholic, the other Protestant-dominated – but both remaining within the United Kingdom. Irish nationalists in the south rejected that idea and carried on with their armed campaign to separate from Britain. Eventually, in 1922, they gained independence and became the Irish Free State, today called the Republic of Ireland. In Ulster, unionist power-holders reluctantly accepted partition as the best alternative to remaining part of Britain. In 1920, the Government of Ireland Act created Northern Ireland, the newest member of the United Kingdom.

A troubled history

In this new country, native Irish Catholics were now a minority, making up less than a third of Northern Ireland's 1.2 million people. Stung by partition, nationalists refused to recognize the British state.
Catholic schoolteachers, supported by church leaders, refused to take state salaries. And when Northern Ireland seated its first parliament in May 1921, nationalist politicians did not take their elected seats in the assembly. The Parliament of Northern Ireland became, essentially, Protestant – and its pro-British leaders pursued a wide variety of anti-Catholic practices, discriminating against Catholics in public housing, voting rights and hiring.

By the 1960s, Catholic nationalists in Northern Ireland were mobilizing to demand more equitable governance. In 1968, police responded violently to a peaceful march to protest inequality in the allocation of public housing in Derry, Northern Ireland's second-largest city. In 60 seconds of unforgettable television footage, the world saw water cannons and baton-wielding officers attack defenseless marchers without restraint.

On Jan. 30, 1972, during another civil rights march in Derry, British soldiers opened fire on unarmed marchers, killing 14. This massacre, known as Bloody Sunday, marked a tipping point. A nonviolent movement for a more inclusive government morphed into a revolutionary campaign to overthrow that government and unify Ireland. The Irish Republican Army, a nationalist paramilitary group, used bombs, targeted assassinations and ambushes to pursue independence from Britain and reunification with Ireland.

Longstanding paramilitary groups that were aligned with pro-U.K. political forces reacted in kind. Known as loyalists, these groups colluded with state security forces to defend Northern Ireland's union with Britain. Euphemistically known as "the troubles," this violence claimed 3,532 lives from 1968 to 1998.

Brexit hits hard

The troubles subsided in April 1998 when the British and Irish governments, along with major political parties in Northern Ireland, signed a landmark U.S.-brokered peace accord.
The Good Friday Agreement established a power-sharing arrangement between the two sides and gave the Northern Irish parliament more authority over domestic affairs. The peace agreement made history. But Northern Ireland remained deeply fragmented by identity politics and paralyzed by dysfunctional governance, according to my research on risk and resilience in the country. Violence has periodically flared up since. Then, in 2020, came Brexit. Britain's negotiated withdrawal from the European Union created a new border in the Irish Sea that economically moved Northern Ireland away from Britain and toward Ireland. Leveraging the instability caused by Brexit, nationalists have renewed calls for a referendum on formal Irish reunification. For unionists loyal to Britain, that represents an existential threat. Young loyalists born after the height of the troubles are particularly fearful of losing a British identity that has always been theirs. Recent spasms of street disorder suggest they will defend that identity with violence, if necessary. In some neighborhoods, nationalist youths have countered with violence of their own. In its centenary year, Northern Ireland teeters on the edge of a painfully familiar precipice. James Waller was a visiting research professor at the George J. Mitchell Institute for Global Peace, Justice and Security at Queen's University in Belfast. |

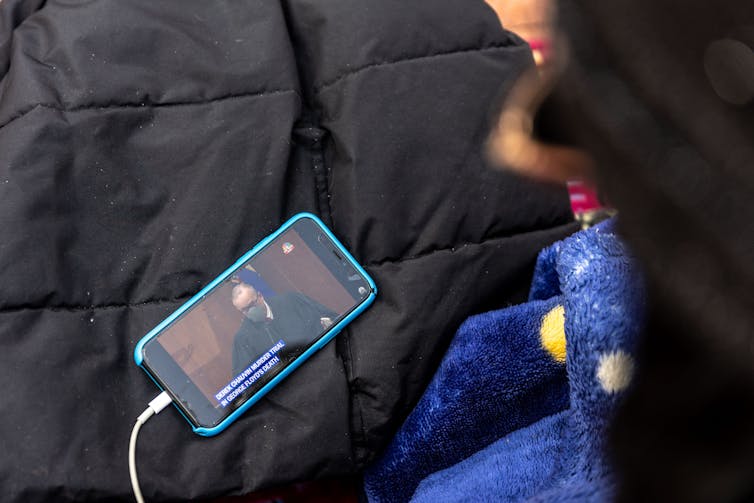

| Derek Chauvin trial: 3 questions America needs to ask about seeking racial justice in a court of law Posted: 12 Apr 2021 05:27 AM PDT  There is a difference between enforcing the law and being the law. The world is now witnessing another in a long history of struggles for racial justice in which this distinction may be ignored. Derek Chauvin, a 45-year-old white former Minneapolis police officer, is on trial for third-degree murder and second-degree manslaughter for the May 25, 2020, death of George Floyd, a 46-year-old African American man. There are three questions I find important to consider as the trial unfolds. These questions address the legal, moral and political legitimacy of any verdict in the trial. I offer them from my perspective as an Afro-Jewish philosopher and political thinker who studies oppression, justice and freedom. They also speak to the divergence between how a trial is conducted, what rules govern it – and the larger issue of racial justice raised by George Floyd's death after Derek Chauvin pressed his knee on Floyd's neck for more than nine minutes. They are questions that need to be asked: 1. Can Chauvin be judged as guilty beyond a reasonable doubt? The presumption of innocence in criminal trials is a feature of the U.S. criminal justice system. And a prosecutor must prove the defendant's guilt beyond a reasonable doubt to a jury of the defendant's peers. The history of the United States reveals, however, that these two conditions apply primarily to white citizens. Black defendants tend to be treated as guilty until proved innocent. Racism often leads to presumptions of reasonableness and good intentions when defendants and witnesses are white, and irrationality and ill intent when defendants, witnesses and even victims are Black. Additionally, race affects jury selection. 
The history of all-white juries for Black defendants and rarely having Black jurors for white ones is evidence of a presumption of white people's validity of judgment versus that of Black Americans. Doubt can be afforded to a white defendant in circumstances where it would be denied a Black one. Thus, Chauvin, as white, could be granted that exculpating doubt despite the evidence shared before millions of viewers in a live-streamed trial. 2. What is the difference between force and violence? The customary questioning of police officers who harm people focuses on their use of what's called "excessive force." This presumes the legal legitimacy of using force in the first place in the specific situation. Violence, however, is the use of illegitimate force. As a result of racism, Black people are often portrayed as preemptively guilty and dangerous. It follows that the perceived threat of danger makes "force" the appropriate description when a police officer claims to be preventing violence. This understanding makes it difficult to find police officers guilty of violence. To call the act "violence" is to acknowledge that it is improper and thus falls, in the case of physical acts of violence, under the purview of criminal law. Once their use of force is presumed legitimate, the question of degree makes it nearly impossible for jurors to find officers guilty. Floyd, who was suspected of purchasing items from a store with a counterfeit $20 bill, was handcuffed and complained of not being able to breathe when Chauvin pulled him from the police vehicle and he fell face down on the ground. Footage from the incident revealed that Chauvin pressed his knee on Floyd's neck for nine minutes and 29 seconds. Floyd was motionless several minutes in, and he had no pulse when Alexander Kueng, one of the officers, checked. Chauvin didn't remove his knee until paramedics arrived and asked him to get off of Floyd so they could examine the motionless patient. 
If force under the circumstances is unwarranted, then its use would constitute violence in both legal and moral senses. Where force is legitimate (for example, to prevent violence) but things go wrong, the presumption is that a mistake, instead of intentional wrongdoing, occurred. An important, related distinction is between justification and excuse. Violence, if the action is illegitimate, is not justified. Force, however, when justified, can become excessive. The question at that point is whether a reasonable person could understand the excess. That understanding makes the action morally excusable. 3. Is there ever excusable police violence? Police are allowed to use force to prevent violence. But at what point does the force become violence? When its use is illegitimate. In U.S. law, the force is illegitimate when done "in the course of committing an offense." Sgt. David Pleoger, Chauvin's former supervisor, stated in the trial: "When Mr. Floyd was no longer offering up any resistance to the officers, they could have ended their restraint." Minneapolis Police Chief Medaria Arradondo testified, "To continue to apply that level of force to a person proned-out, handcuffed behind their back, that in no way, shape or form is anything that is by policy." He declared, "I vehemently disagree that that was an appropriate use of force." That an act was deemed by prosecutors to be violent, defined as an illegitimate use of force resulting in death, is a necessary conclusion for charges of murder and manslaughter. Both require ill intent or, in legal terms, a mens rea ("evil mind"). The absence of a reasonable excuse affects the legal interpretation of the act. That the act was not preventing violence but was, instead, one of committing it, made the action inexcusable. The Chauvin case, like so many others, leads to the question: What is the difference between enforcing the law and imagining being the law? Enforcing the law means one is acting within the law. 
That makes the action legitimate. Being the law forces others, even law-abiding people, below the enforcer, subject to their actions. If no one is equal to or above the enforcer, then the enforcer is raised above the law. Such people would be accountable only to themselves. Police officers and any state officials who believe they are the law, versus implementers or enforcers of the law, place themselves above the law. Legal justice requires pulling such officials back under the jurisdiction of law. The purpose of a trial is, in principle, to subject the accused to the law instead of placing him, her, or them above it. Where the accused is placed above the law, there is an unjust system of justice. Lewis R. Gordon does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment. |

| Write ill of the dead? Obits rarely cross that taboo as they look for the positive in people's lives Posted: 12 Apr 2021 05:26 AM PDT  Capturing a life accurately and sympathetically is a challenge, more so if it is one that lasts nearly a century. So when a notable person like the Duke of Edinburgh dies, obituary writers face a quandary: What should be highlighted, softened or even ignored? News organizations were quick to remember Prince Philip's long marriage to Queen Elizabeth II and decades of public service. But any character flaws or mistakes, including past public racist comments, were diminished. CNN's coverage on April 9 provides a good example of this softened approach. "The duke," it noted, "was known for off-the-cuff remarks that often displayed a quick wit but occasionally missed the mark, sometimes in spectacular fashion." The Associated Press made more direct mention of Philip's racist comments – but found itself under attack online and from other parts of the media as a result. It later modified the language in the obit, changing "occasionally racist and sexist remarks" to "occasionally deeply offensive remarks." Obituaries for former presidents, entertainers and athletes offer example after example of selective memory. Negativity is taboo, even in obits written by journalists about public figures. Most people don't like to speak ill of the dead. As a scholar of journalism history and public memory, I examined more than 8,000 newspaper obituaries from 1818 to 1930 to see what they reveal about American culture. An obit is a news report of a death, but it also offers a tiny summary of what people want to remember about a life. For most of us that memory usually represents an ideal – we tend to filter out unpleasant aspects or episodes. Taken collectively and over time, obituaries tell us much about what – and who – society values. 
A life in print: Occasionally one can be brutally honest, such as the obituary published in 2018 in the Redwood Falls Gazette for a Minnesota woman who evidently was not well loved by members of her own family. According to the memorial, the woman would "face judgment" for abandoning her children. The newspaper removed the obituary from its website after public criticism that it went too far. But for the most part, they focus on the positive. Nineteenth-century obituaries celebrated people for attributes of character. Men were remembered for patriotism, gallantry, vigilance, boldness and honesty. Women's obits recorded entirely different qualities: patience, resignation, obedience, affection, amiability and piety. Sarah English, a wife and mother who died in 1818, was "as intelligent as she was good." "Not at all ambitious of worldly show, she chose to be useful rather than gay. Her domestic concerns were managed with the most admirable economy exhibiting at the same time a degree of comfort and neatness not to be surpassed," according to 19th-century newspaper The National Intelligencer. The 1838 obituary for 50-year-old Virginian William P. Custis told readers of the same newspaper, "There is in the life of a noble, independent and honest man, something so worthy of imitation, something that so strongly commends itself to the approbation of a virtuous mind, that his name should not be left in oblivion, nor his influence be lost." Not everyone was remembered. Silences on obituary pages can be as telling as what was published. The few obituaries for African Americans or Native Americans in the obits I looked at were included mostly when they died in an unusual or mysterious way, lived to be 100 or served the dominant culture. For example, "a respectable colored man named Thomas Henry Songan," a 32-year-old ship steward, "fell to the floor a corpse," the New York Daily Times wrote in 1855. 
The obit for Choctaw Chief Minto Mushulatubbee, who died in 1838, assured readers that "he was a strong friend of the whites until the day of his death." Obituaries in the early 20th century tended not to focus on attributes of character. Rather, they reflected an industrial society that valued profit and production. Men were noted for professional accomplishments, wealth, long years at work, university education or being well known and prominent. Women were remembered for their associations with successful men, social prominence and wealth. The New York Times in 1910 recorded one woman's death this way: "Mrs. Albert E. Plant, whose husband is the first cousin of the late Henry B. Plant, the railroad and steamship owner, was killed this morning by the express train from New York City." Headline after headline in news reports of someone's death and in the obituary pages mourned a man's "Career Cut Short," even for deceased male children. Portraits of grief: Obituaries also revealed what Americans thought about death. In the early 19th century, illnesses were "endured with Christian patience," the deceased "ready and willing to obey the summons of her God." By the 1850s, the language became more sensational. The deceased were "removed by the Omnipotent Author," "scathed by the wing of the destroying angel," or "paled by the mighty Death King." That language all but disappeared after the Civil War. After so much death, it became unpatriotic to dwell on it. Obituaries offer a window into what we value. The New York Times' beautiful "Portraits of Grief" tribute to the victims of the terrorist attacks of September 11, 2001, portrayed active people in the prime of life, with robust careers and family lives. COVID-19 brings a new collective focus on death, and obituaries for its victims, I believe, will be just as revealing. 
But be it a royal dying of old age or a grocery worker whose life was cut short by disease, one thing is likely: The words accompanying the death will focus more on the positive. Janice Hume does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment. |

| What inspired digital nomads to flee America's big cities may spur legions of remote workers to do the same Posted: 12 Apr 2021 05:26 AM PDT  If one thing is clear about remote work, it's this: Many people prefer it and don't want their bosses to take it away. When the pandemic forced office employees into lockdown and cut them off from spending in-person time with their colleagues, they almost immediately realized that they favor remote work over their traditional office routines and norms. As remote workers of all ages contemplate their futures – and as some offices and schools start to reopen – many Americans are asking hard questions about whether they wish to return to their old lives, and what they're willing to sacrifice or endure in the years to come. Even before the pandemic, there were people asking whether office life jibed with their aspirations. We spent years studying "digital nomads" – workers who had left behind their homes, cities and most of their possessions to embark on what they call "location independent" lives. Our research taught us several important lessons about the conditions that push workers away from offices and major metropolitan areas, pulling them toward new lifestyles. Legions of people now have the chance to reinvent their relationship to their work in much the same way. Big-city bait and switch: Most digital nomads started out excited to work in career-track jobs for prestigious employers. Moving to cities like New York and London, they wanted to spend their free time meeting new people, going to museums and trying out new restaurants. But then came the burnout. Although these cities certainly host institutions that can inspire creativity and cultivate new relationships, digital nomads rarely had time to take advantage of them. Instead, high cost of living, time constraints and work demands contributed to an oppressive culture of materialism and workaholism. 
Pauline, 28, who worked in advertising helping large corporate clients to develop brand identities through music, likened city life for professionals in her peer group to a "hamster wheel." (The names used in this article are pseudonyms, as required by research protocol.) "The thing about New York is it's kind of like the battle of the busiest," she said. "It's like, 'Oh, you're so busy? No, I'm so busy.'" Most of the digital nomads we studied had been lured into what urbanist Richard Florida termed "creative class" jobs – positions in design, tech, marketing and entertainment. They assumed this work would prove fulfilling enough to offset what they sacrificed in terms of time spent on social and creative pursuits. Yet these digital nomads told us that their jobs were far less interesting and creative than they had been led to expect. Worse, their employers continued to demand that they be "all in" for work – and accept the controlling aspects of office life without providing the development, mentorship or meaningful work they felt they had been promised. As they looked to the future, they saw only more of the same. Ellie, 33, a former business journalist who is now a freelance writer and entrepreneur, told us: "A lot of people don't have positive role models at work, so then it's sort of like 'Why am I climbing the ladder to try and get this job? This doesn't seem like a good way to spend the next twenty years.'" By their late 20s to early 30s, digital nomads were actively researching ways to leave their career-track jobs in top-tier global cities. Looking for a fresh start: Although they left some of the world's most glamorous cities, the digital nomads we studied were not homesteaders working from the wilderness; they needed access to the conveniences of contemporary life in order to be productive. 
Looking abroad, they quickly learned that places like Bali in Indonesia and Chiang Mai in Thailand had the necessary infrastructure to support them at a fraction of the cost of their former lives. With more and more companies now offering employees the choice to work remotely, there's no reason to think digital nomads have to travel to Southeast Asia – or even leave the United States – to transform their work lives. During the pandemic, some people have already migrated away from the nation's most expensive real estate markets to smaller cities and towns to be closer to nature or family. Many of these places still possess vibrant local cultures. As commutes to work disappear from daily life, such moves could leave remote workers with more available income and more free time. The digital nomads we studied often used savings in time and money to try new things, like exploring side hustles. One recent study even found, somewhat paradoxically, that the sense of empowerment that came from embarking on a side hustle actually improved performance in workers' primary jobs. The future of work, while not entirely remote, will undoubtedly offer more remote options to many more workers. Although some business leaders are still reluctant to accept their employees' desire to leave the office behind, local governments are embracing the trend, with several U.S. cities and states – along with countries around the world – developing plans to attract remote workers. This migration, whether domestic or international, has the potential to enrich communities and cultivate more satisfying work lives. The authors do not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and have disclosed no relevant affiliations beyond their academic appointment. |

| MLB's decision to drop Atlanta highlights the economic power companies can wield over lawmakers – when they choose to Posted: 12 Apr 2021 05:26 AM PDT  Major League Baseball knows how to exert leverage over local lawmakers. Over 100 companies, including Delta Air Lines and Coca-Cola, reacted to Georgia's new restrictive voting law by publicly denouncing it. While some executives are discussing doing more – such as halting donations or delaying investments – MLB is among the few organizations to go beyond words: It immediately said it was going to move the 2021 All-Star Game from Atlanta to Denver. Both MLB's decision to relocate the July 13 game and the many corporate press releases issued about the voting law drew a swift rebuke from Republicans, who vowed boycotts of baseball and the products these companies produce. The Senate minority leader even threatened retribution if companies didn't stay out of politics – with an exception for campaign contributions. As a corporate governance scholar, I have studied how corporations use their economic power to get what they want from lawmakers. I believe Republicans' angry reactions signal just how deeply concerned they are that other companies might follow MLB's lead. The nature of corporate power: To help understand why, consider this: MLB's decision is estimated to cost Georgia as much as US$100 million in lost economic activity. Corporations understand that the jobs and tax revenue they can provide – or withhold – give them power at the negotiating table. Other states are all competing for the same investments. Tesla, for example, agreed to build a factory near Reno, Nevada, in 2014 in exchange for $1.4 billion in state benefits after a bidding war. National Football League teams have been especially ruthless in their negotiations with cities and states and have demanded hefty taxpayer subsidies for new stadiums. By threatening to move to another city, team owners can extract hundreds of millions of dollars in new benefits. The dynamic is easy to understand. 
State lawmakers usually cater to corporations because they want to attract business investment and keep it. When corporations leave, they can cause property values to stagnate and tax revenue to plunge – as happened to Hartford, Connecticut, a few years ago after several large insurance companies abandoned the city. How corporations use their leverage is up to them. They can seek to feed their bottom lines or to advance social causes. Traditionally it's the former. For example, many U.S. companies lobbied for a $1 trillion corporate tax cut in 2017. But increasingly it's the latter too. A rise in corporate social activism: In 2015, the threat of corporate boycotts caused then-Gov. Mike Pence to support changing an Indiana law that would otherwise have allowed anti-gay discrimination in the name of religious freedom. Something similar happened in 2016 when Georgia's governor bowed to corporate pressure and vetoed a bill that would have legalized discrimination against same-sex couples on religious grounds. And again in 2017, North Carolina partially repealed a law that targeted transgender people over concerns that boycotts – such as by PayPal, the NCAA and former Beatle Ringo Starr – would cost the state $3.76 billion over a dozen years. Those boycotts, of course, did not end efforts to restrict LGBTQ rights at the state level, but they demonstrated that when corporations band together, they are capable of exerting enormous economic and political pressure to advance social causes. And that possibility is likely on the minds of Georgia lawmakers following MLB's All-Star Game decision. Words and deeds: Despite the apparent leverage companies wield, it's not simple for most companies to just get up and leave. For example, Delta – whose largest hub is in Atlanta – benefits from a tax break on jet fuel. And Coca-Cola's ties to Georgia are deep and long-standing, dating back to a soda fountain in Atlanta in 1886. 
Companies don't sever such ties or give up generous tax breaks easily – and neither Delta nor Coke has even suggested that it might. But if the many companies that publicly objected to the law want to have an impact on policy – and see the law changed or repealed – money has to be at stake, as I learned in my own research on how North Carolina changed its 2015 law only after companies began boycotting the state. Delta and Coca-Cola employ thousands of people and generate billions of dollars in economic activity in the state. That's serious leverage they could use if they felt the voting rights issue was important enough. Words and press releases alone usually aren't enough. Making a difference: Ultimately, this threat of lost business is what makes corporations a formidable adversary. The question, then, is what it would take for them to leave Georgia. Without knowing MLB's internal deliberations, I cannot say why the league dropped Atlanta with so little hesitation, but there are some likely possibilities. First, just as a matter of timing, MLB may have been concerned about holding the All-Star game in the midst of a political controversy, drawing unfavorable attention, especially in light of its own recent commitment to have zero tolerance when faced with racial injustice. MLB may have also taken an opportunity to show solidarity with its players, given the high-profile advocacy for social causes of many professional athletes. Research suggests that employee diversity is an important consideration for corporations on matters of social justice. Finally, just as a practical matter, moving the All-Star game may have offered MLB some public relations benefits at relatively low cost to itself. 
And those same reasons are likely why other sports leagues – such as the NCAA in North Carolina and the NFL with the 2016 Georgia bill – are often out front on these types of social issues. Georgia should not count on any backlash subsiding soon; the NCAA withheld championship games from South Carolina for 15 years until the state removed the Confederate flag from the Statehouse grounds. For now, MLB's decision has not prompted the kind of mass corporate revolt that could force change. On April 12, Will Smith's production company said it was pulling its upcoming slavery-era drama, "Emancipation," out of Georgia because of the voting law. But it's unclear, in particular, whether any Georgia-based corporations will follow MLB's lead by removing business operations from the state. The voting law that passed is actually less restrictive than earlier versions of the bill, suggesting that criticism – including from companies – likely had some impact. Lawmakers may have made some changes precisely to avoid sparking a stronger corporate response. But if companies like Delta and Coca-Cola really want to make a difference and use their leverage on this issue, they will need to go beyond words. Their actions would speak much louder. Article updated on April 12 to add references to production of "Emancipation" being moved out of Georgia. Benjamin Means does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment. |
